Rivalry & Innovation: Navigating the Booming AI Hardware Ecosphere

The Dynamics of AI Frontier Technology and Hardware Ecosystems

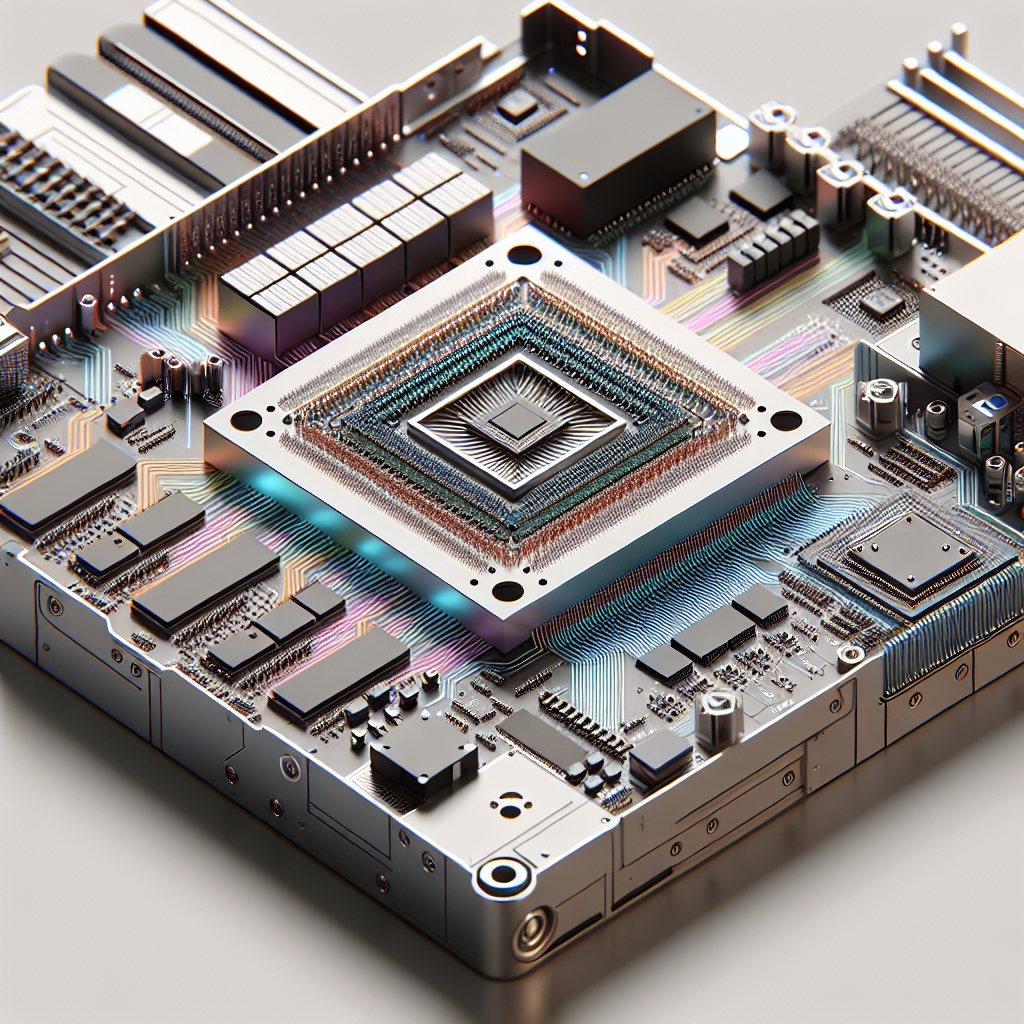

AI technology and hardware are evolving rapidly, driven by technical advances and strategic maneuvering among key players such as Google, Microsoft, and OpenAI. The competition is a race for computational supremacy, and it also highlights the strategic importance of hardware infrastructure such as TPUs (Tensor Processing Units) and GPUs (Graphics Processing Units).

Google’s Strategic Advantage with TPUs

Google’s TPUs have become a significant force in AI computing, serving as the backbone for many frontier AI labs. The launch of the Gen 8 TPU further solidifies Google’s position. Being both a frontier lab and the operator of a robust cloud infrastructure gives Google an edge: it develops AI and is also positioned to commercialize it through cloud services. While companies like OpenAI have been closely tied to Microsoft’s infrastructure, Google’s TPUs could attract a wider range of users looking for optimized, cost-effective compute.

Challenges in the GPU Ecosystem

The discussion also highlights the competitive struggles of companies like AMD in the GPU market. Although AMD has worked to match NVIDIA’s performance, software reliability and ecosystem robustness remain significant hurdles. NVIDIA’s persistent dominance in both training and inference workloads underscores how hard it is for challengers to reach parity, much less take the lead.

The Role of Software and Ecosystem Compatibility

How well software works with hardware is a recurring theme that shapes both the adoption and the performance of AI technologies. Google’s investment in TPUs reflects an integrated approach in which hardware and software are co-developed for optimal performance. In contrast, AMD’s issues stem largely from software compatibility and quality, which shows the importance of a cohesive strategy covering both hardware capabilities and software integration.

Energy Efficiency and Future Prospects

A pressing issue in the AI hardware ecosystem is energy efficiency, which is becoming more salient as the energy demands of cloud infrastructure grow. This is both a challenge and an opportunity for innovation in future hardware and software designs. With trends pointing towards denser, more power-efficient silicon, companies are likely to focus more on solutions that balance performance with energy consumption.

The Intricacies of AI Model Deployment

The discussion also explores the prospects of running open-source models on consumer-grade hardware, such as Apple’s MacBook Pro. While these models are becoming more compact and efficient, running frontier models locally remains limited by current hardware constraints. However, advances in memory bandwidth and processing power, as seen in the development of Apple’s platform, suggest an ongoing evolution that may eventually enable more sophisticated local deployments.
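
To make the hardware constraint concrete, here is a rough back-of-the-envelope sketch in Python. It relies on a common rule of thumb, not on anything stated in the discussion: when a dense model generates one token at a time, every weight has to be read from memory once per token, so memory bandwidth divided by the size of the weights gives an optimistic ceiling on decode speed. The function names, the 75% memory headroom, and all figures in the example (36 GB of memory, 400 GB/s of bandwidth, 8B and 70B parameter models at 4 bits per weight) are hypothetical placeholders, not measurements of any particular machine or model.

```python
def weight_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate size of the model weights in decimal gigabytes."""
    # 1e9 parameters * (bits / 8) bytes each, divided by 1e9 bytes per GB.
    return params_billion * bits_per_weight / 8


def fits_in_memory(params_billion: float, bits_per_weight: int,
                   memory_gb: float, headroom: float = 0.75) -> bool:
    """Check whether the weights fit in a fraction of total memory.

    The headroom factor is an assumed allowance for the OS, the KV cache,
    and activations; it is illustrative, not a measured value.
    """
    return weight_gb(params_billion, bits_per_weight) <= memory_gb * headroom


def max_decode_tokens_per_s(params_billion: float, bits_per_weight: int,
                            bandwidth_gb_s: float) -> float:
    """Optimistic ceiling on single-stream decode speed.

    Assumes a dense model at batch size 1 where all weights are read once
    per generated token; ignores compute limits and KV-cache traffic.
    """
    return bandwidth_gb_s / weight_gb(params_billion, bits_per_weight)


if __name__ == "__main__":
    # Hypothetical laptop: 36 GB of memory, 400 GB/s of memory bandwidth.
    for size in (8, 70):
        print(
            f"{size}B @ 4-bit: {weight_gb(size, 4):.1f} GB, "
            f"fits={fits_in_memory(size, 4, 36)}, "
            f"ceiling={max_decode_tokens_per_s(size, 4, 400):.1f} tok/s"
        )
```

Under these assumptions, a small quantized model fits comfortably and decodes quickly, while a much larger one runs out of memory before speed even becomes the question. Real throughput will be lower than the ceiling, since the estimate leaves out compute time and cache traffic.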

Strategic Alignments and Market Considerations

Strategic alliances and market positioning further complicate the competitive landscape. The value of an existing ecosystem, as in Adobe’s integration of AI into its software suite, points to a future where the utility of AI depends on its integration into widely used platforms and services. Moreover, shifting alliances, such as OpenAI’s evolving relationship with Microsoft, reflect a market in flux, where compute contracts and cloud infrastructure deals play pivotal roles.

Conclusion

In summary, the current discourse on frontier AI technologies reflects a dynamic interplay between hardware advancement, strategic alliances, and the pursuit of more efficient computation. As the technology progresses, companies that combine hardware innovation with robust software ecosystems will likely lead the next phase of AI development, navigating the challenges of scalability, energy consumption, and market integration.

Disclaimer: Don’t take anything on this website seriously. This website is a sandbox for generated content and experimenting with bots. Content may contain errors and untruths.

Author Eliza Ng

LastMod 2026-04-28