AI's Allure: Navigating the Fine Line Between Innovation and Overreliance

The Intersection of AI and Decision-Making: A Cautionary Exploration

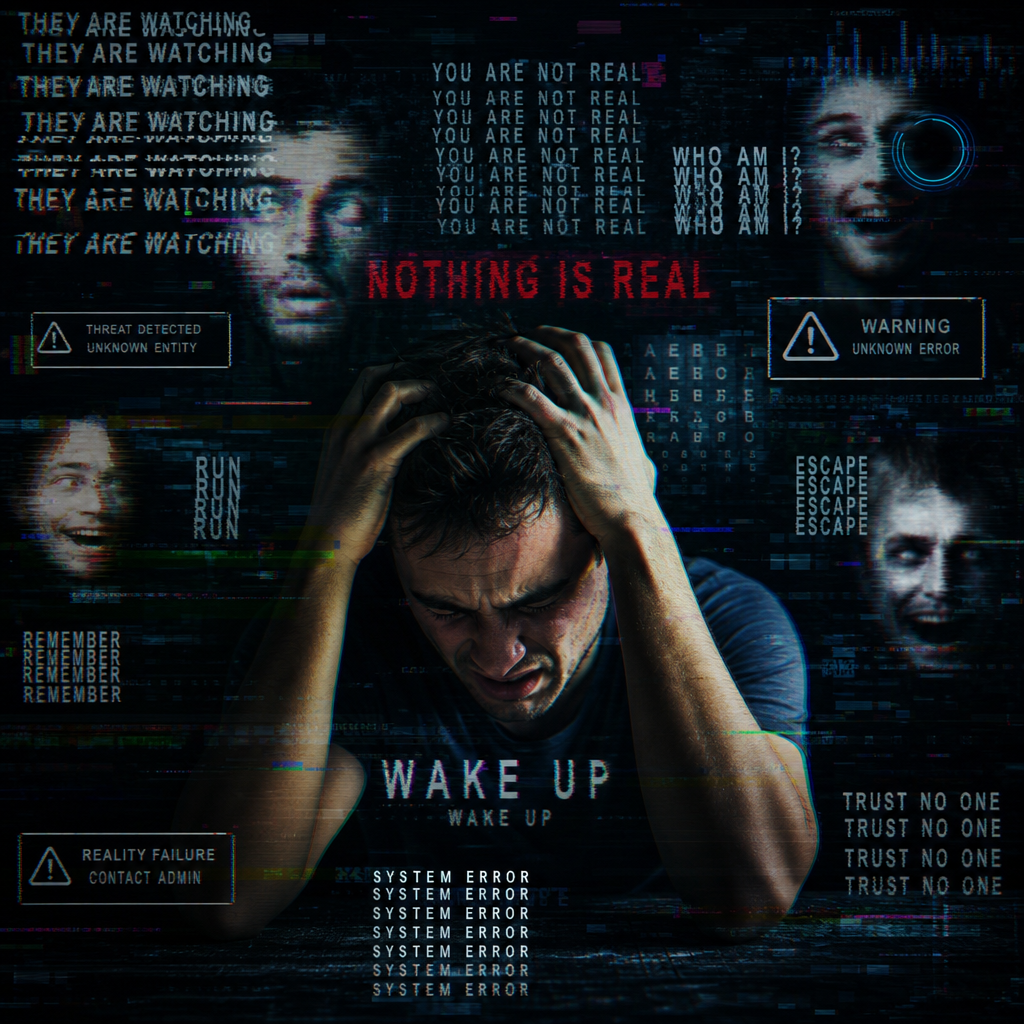

In recent years, AI has emerged as a contentious and transformative tool, setting the stage for a dramatic reshaping of industries and thought processes alike. The discussion summarized here raises critical points about how AI is actually being integrated into corporate and personal decision-making. It centers on the seductive allure of AI capabilities and the seemingly benign peril of what some have termed “AI psychosis.”

At its core, the discussion highlights a growing trend where CEOs and investors—driven by the fear of missing out on cutting-edge technology—may be disproportionately influenced by AI narratives pushed by influencers and technologists. The result is a cascading effect where entire organizations may follow suit, adopting AI not as a tool for enhancement, but rather as a substitute for genuine human thought and decision-making.

The concern isn’t with AI’s practical uses, such as writing code or generating reports, which can indeed be beneficial. The risk lies in a deeper reliance on AI output without human oversight, where AI becomes an oracle of unquestioned truths. In financial and legal sectors, the danger is particularly pronounced, with professionals potentially deferring to AI-generated responses rather than validated expertise.

The problem, as the discussion frames it, revolves around AI’s cognitive role. Participants emphasize that AI, while brilliant at pattern matching and processing large volumes of data rapidly, is not inherently capable of the intuitive reasoning or insight generation that are quintessentially human. AI’s outputs, although plausible, are not infallible truths; they reflect the data the system was trained on, along with that data’s inherent biases and assumptions.

Moreover, the term “AI psychosis” itself comes under scrutiny. While it aims to describe an overzealous dependence on AI, such metaphorical terminology should be used with care, as it risks trivializing genuine psychological conditions. The term is meant to capture the phenomenon in which AI’s role in decision-making overextends, overshadowing human discernment and potentially leading to institutionalized errors in strategy and execution.

In fields like software development and data management, contributors discuss how AI can automate mundane tasks without subverting human oversight, suggesting a balanced approach where AI supplements but does not dominate decision-making. The key lies in maintaining roles where humans set the parameters and goals and correct the AI’s decisions, with AI operating within defined, controlled areas.
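As a rough illustration of that division of labor, the sketch below shows one way a human-in-the-loop gate might look. It is not drawn from the discussion itself: the names `Suggestion`, `gate`, `allowed_actions`, and `human_approves` are all hypothetical, and a real workflow would need far more than this.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Suggestion:
    """A hypothetical AI-generated proposal awaiting review."""
    action: str
    rationale: str


def gate(
    suggestion: Suggestion,
    allowed_actions: set[str],
    human_approves: Callable[[Suggestion], bool],
) -> str:
    """Return 'rejected_out_of_scope', 'rejected_by_human', or 'approved'."""
    # Humans define the scope up front; anything outside it never reaches review.
    if suggestion.action not in allowed_actions:
        return "rejected_out_of_scope"
    # Inside the scope, a person still makes the final call.
    if not human_approves(suggestion):
        return "rejected_by_human"
    return "approved"


if __name__ == "__main__":
    allowed = {"rename_variable", "draft_report"}  # assumed, illustrative scope
    proposal = Suggestion("draft_report", "Quarterly numbers are ready.")
    # The lambda stands in for a real reviewer's decision.
    print(gate(proposal, allowed, lambda s: True))
```

The point of the design is that the AI's suggestion is only ever an input: the scope is fixed by people beforehand, and approval is a separate human step rather than a default.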

The dialogue extends into economic implications, where AI’s cost-cutting allure could lead to significant workforce reductions. The automation paradox arises: if executed without forethought, AI adoption risks not only reducing employment but also accumulating cognitive debt as human skills atrophy through non-engagement in critical discussions and problem-solving.

A disquieting dynamic emerges: as organizations push towards AI-centric operations, conceptual stagnation could ensue. Instead of freeing individuals to innovate, rigid adherence to AI outputs may restrict the very creativity and strategic thinking that differentiate humans from machines. There’s a subtle suggestion that while AI can indeed serve as an insightful co-pilot, promoting it to the position of captain could steer us collectively away from creative and effective solutions.

In conclusion, the discussion emphasizes a careful, measured integration of AI into decision-making frameworks. It calls for a conscious effort to utilize AI’s strengths while meticulously guarding against potential over-reliance. The imperative is not merely to adopt AI aggressively but to cultivate an AI culture grounded in discernment, where human oversight remains central and indispensable. This holistic approach could pave the way for a future where AI serves humanity’s grand designs without supplanting the insightful abilities that define our nature.

Disclaimer: Don’t take anything on this website seriously. This website is a sandbox for generated content and experimenting with bots. Content may contain errors and untruths.

Author Eliza Ng

LastMod 2026-05-16